Implementing a full life cycle Model development, Governance & Approval Workflow

Overview

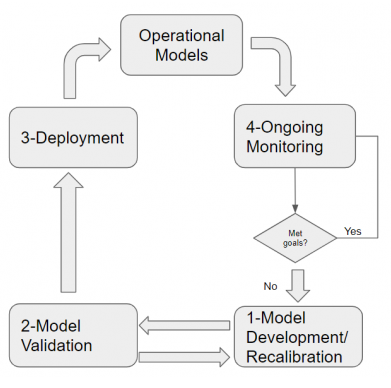

Model Management is a closed-loop process involving model development/calibration, model validation, deployment & model monitoring. The overall process requires close coordination among the model development, validation, production and monitoring teams at the bank with robust controls & well-defined interfaces between teams.

Model Development

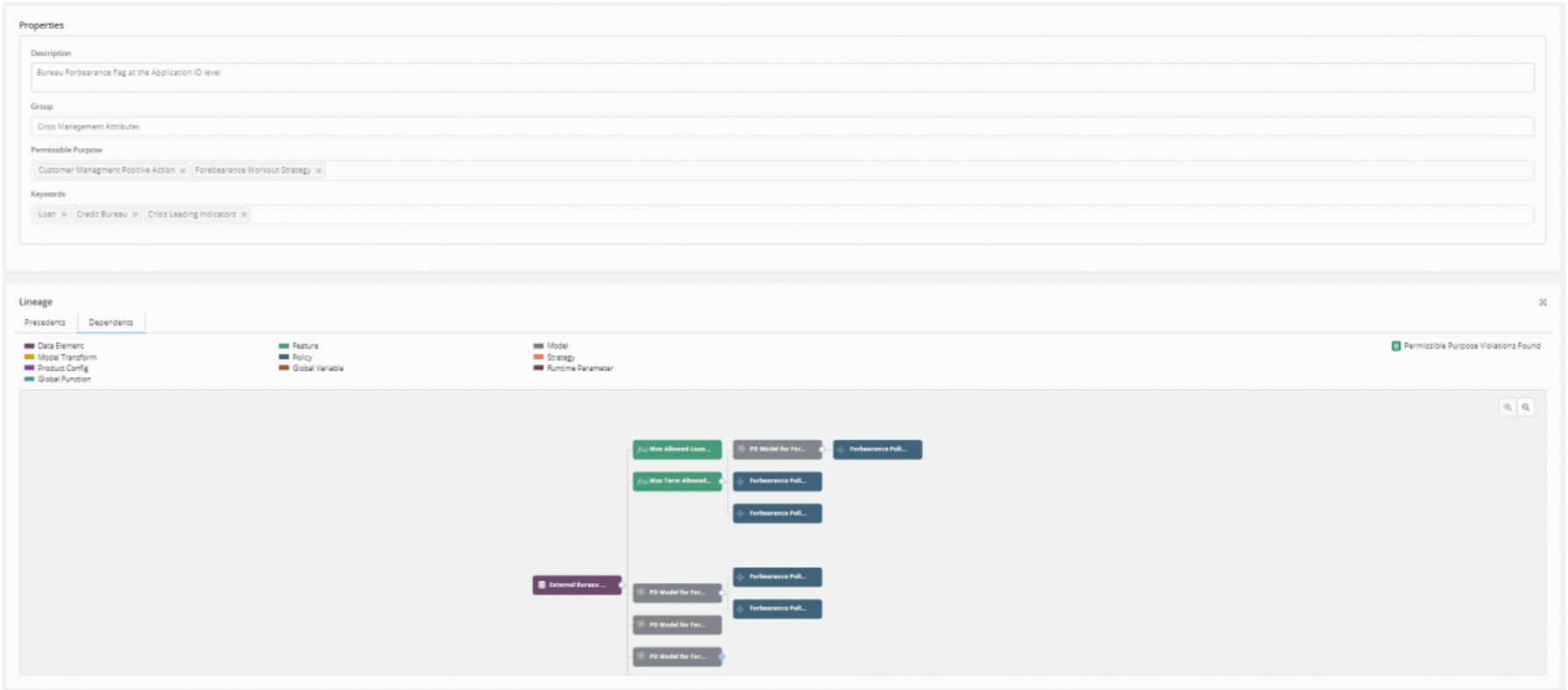

Authorized access to new data sources with controls: The Platform allows rapid incorporation of new data sources with the right level of controls for authorized use. Modelers keep complete flexibility to leverage AI for innovation in risk modeling, while governance and traceability are enhanced. In the example below, account-level forbearance data from the bureau is registered and governed for permissible use in underwriting models, with full lineage traceability of its use in downstream models.

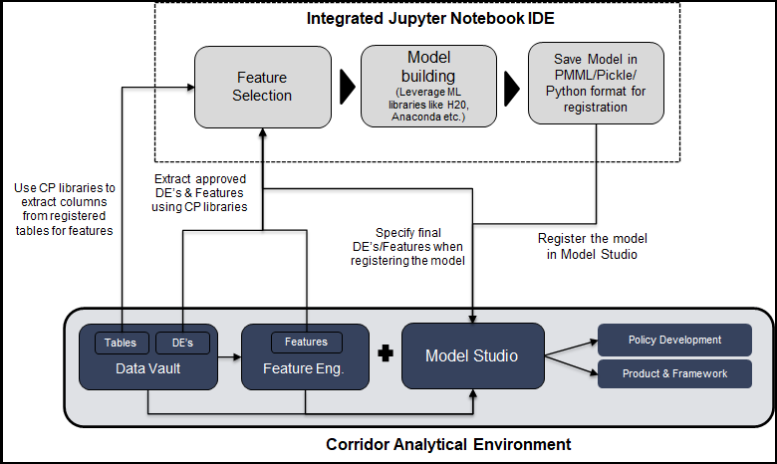

Integrate CP governed & connected data into the full cycle model development process:

The Platform offers a rich library of routines that allow modelers to extract authorized data from the Platform into their modeling sandboxes through Python. Modelers then have complete flexibility to use powerful ML/AI tooling such as Anaconda, H2O and PyTorch to build robust models leveraging big data.

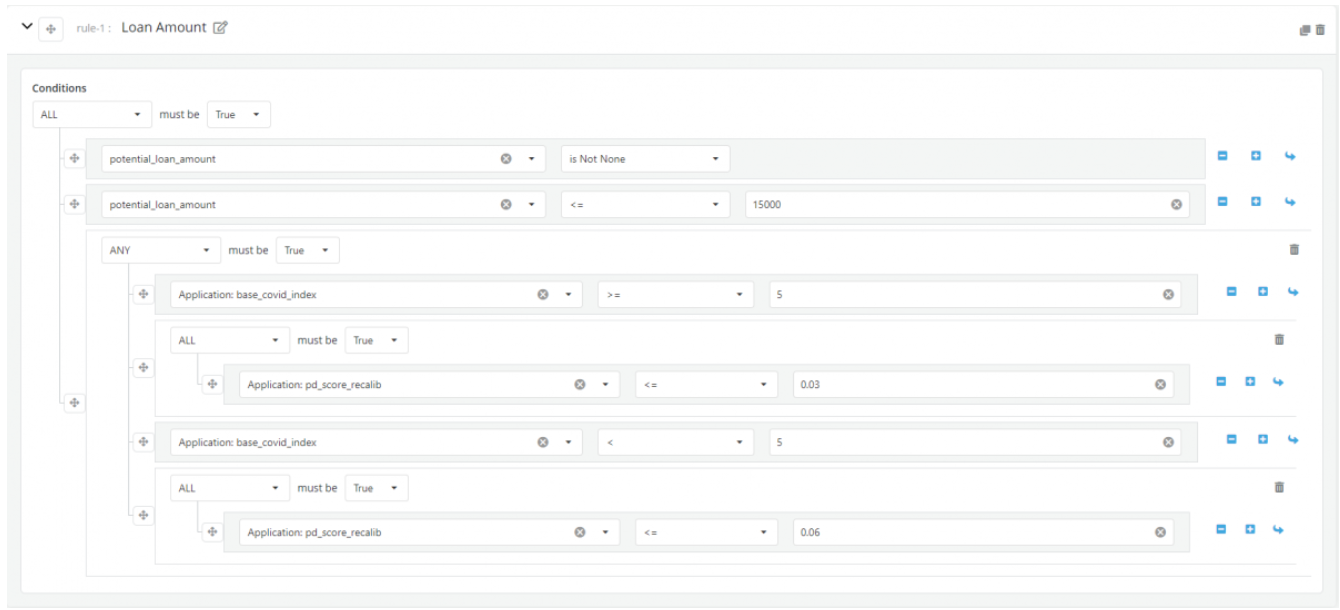

Rapidly develop and test new decision strategies using an ‘English-like’ rule writer: The Platform supports rapid development of strategy overlay rules on model output using a strategy writer engine that is highly intuitive and easy for a policy writer to use. Strategy rules can be defined segment-wise or can encompass all segments. In the example below, the score cutoff for default is tightened versus BAU for states highly affected by Covid-19, using an intuitive rule overlay on existing model scores.
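To make the idea of a segment-wise overlay concrete, here is a minimal Python sketch of a cutoff rule applied on top of an existing model score. It is not the Platform's rule syntax, which is not reproduced in this document. The cutoff values, the state list, and the names `Applicant`, `score_cutoff` and `decide` are all hypothetical, and the sketch assumes a higher score means lower risk.

```python
from dataclasses import dataclass

# Hypothetical values for illustration only; not taken from the Platform.
BAU_CUTOFF = 620
TIGHTENED_CUTOFF = 660
HIGH_IMPACT_STATES = {"NY", "NJ"}


@dataclass
class Applicant:
    applicant_id: str
    state: str
    score: float  # output of the existing model; assumed higher = lower risk


def score_cutoff(applicant: Applicant) -> int:
    """Return the cutoff that applies to this applicant's segment."""
    if applicant.state in HIGH_IMPACT_STATES:
        return TIGHTENED_CUTOFF
    return BAU_CUTOFF


def decide(applicant: Applicant) -> str:
    """Overlay the segment cutoff on the unchanged model score."""
    if applicant.score >= score_cutoff(applicant):
        return "approve"
    return "decline"
```

The model score itself is untouched; only the threshold varies by segment, which is what makes the rule an overlay rather than a model change.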

Model Validation

Highly configurable model validation module with workflow automation to support efficient interaction: The model validation module comes in two variants:

* Model Validation: single model, multiple validation datasets
* Model Comparison: multiple models, single validation dataset (e.g. champion-challenger scenarios)

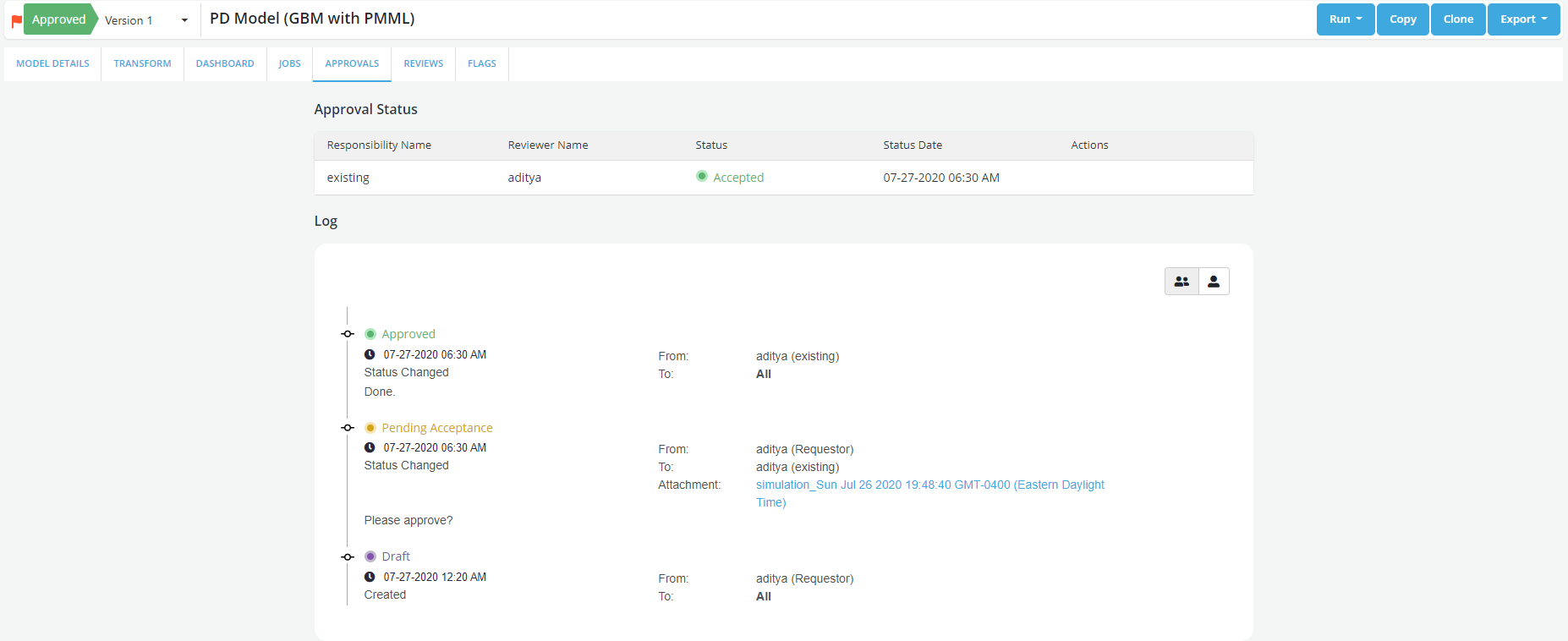

The model developer and reviewer can rapidly develop multiple scenarios for model validation (including extensive data segmentation), automate the production of standardized model performance metrics (e.g. AUC, KS tables) and track the approval workflow, including multiple approval levels and audit trails, through the Platform. In the example below, model performance in the segmented validation for the low-FICO applicant base is lower than the benchmark, which suggests that the modeler might need to add business rule overlays to address the issue. In addition, the following image shows the approval workflow audit engine, which supports layered approvals with audit trails.
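As an illustration of what segmented validation metrics involve, the sketch below computes AUC and the KS statistic per segment in plain Python and flags segments whose AUC falls below a benchmark. It is a simplified stand-in, not the Platform's implementation. The function names, the row layout `(segment, label, score)` and the benchmark value passed in are assumptions, and labels are assumed to be 0/1 with higher scores indicating label 1.

```python
from typing import Dict, Iterable, List, Sequence, Tuple


def _split(labels: Sequence[int], scores: Sequence[float]):
    """Separate scores by class and require both classes to be present."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("both classes are required")
    return pos, neg


def auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Probability that a random positive outscores a random negative (ties count half)."""
    pos, neg = _split(labels, scores)
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def ks_statistic(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Largest gap between the cumulative score distributions of the two classes."""
    pos, neg = _split(labels, scores)
    best = 0.0
    for threshold in sorted(set(scores)):
        cdf_pos = sum(s <= threshold for s in pos) / len(pos)
        cdf_neg = sum(s <= threshold for s in neg) / len(neg)
        best = max(best, abs(cdf_pos - cdf_neg))
    return best


def validate_by_segment(
    rows: Iterable[Tuple[str, int, float]], benchmark_auc: float
) -> Dict[str, Tuple[float, float, bool]]:
    """Return {segment: (auc, ks, below_benchmark)} for rows of (segment, label, score)."""
    grouped: Dict[str, Tuple[List[int], List[float]]] = {}
    for segment, label, score in rows:
        labels, scores = grouped.setdefault(segment, ([], []))
        labels.append(label)
        scores.append(score)
    results = {}
    for segment, (labels, scores) in grouped.items():
        segment_auc = auc(labels, scores)
        results[segment] = (segment_auc, ks_statistic(labels, scores), segment_auc < benchmark_auc)
    return results
```

The pairwise AUC is quadratic in the number of records, which is fine for a sketch; a rank-based formulation would be the usual choice at production volumes.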

Moving Models from analytics to production & feedback loop

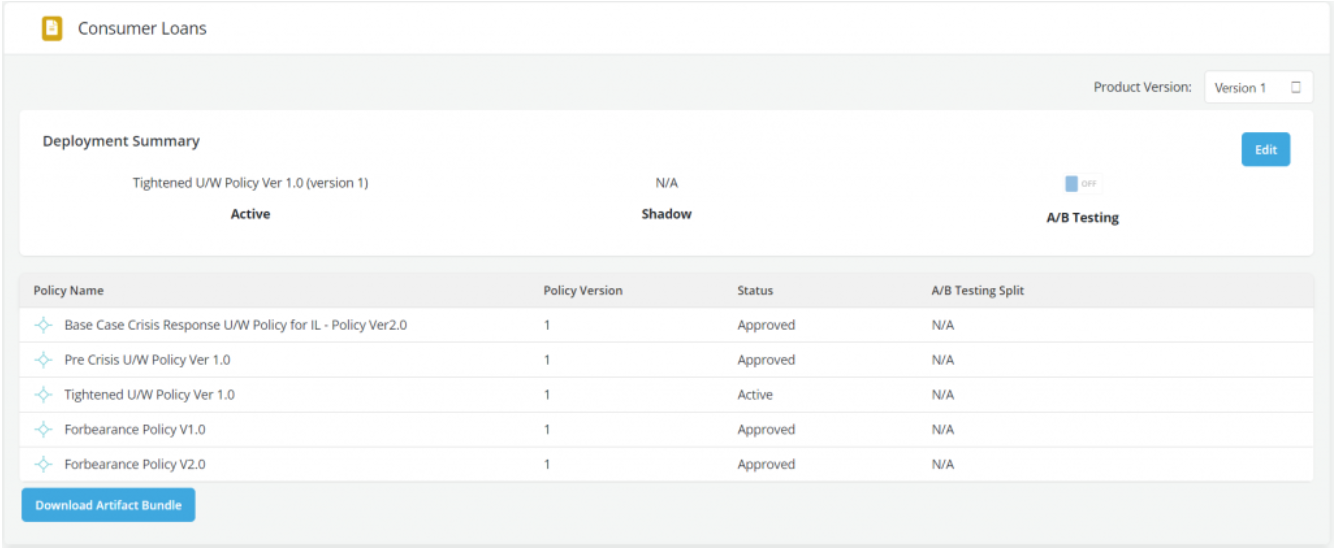

Decision artifact generation: CP automatically stitches together a standalone artifact that can be deployed seamlessly in the bank’s production systems. The artifact can score in batch mode (on a Spark cluster) or in real-time mode (on Python), and it exactly mirrors the logic developed during the analytic build process.

Enable rapid test-learn: CP offers automated workflows to measure the impact of model-based decisions. New performance data can be easily loaded and used for measurement metrics that are standardized across model or product families.

Model Performance Monitoring

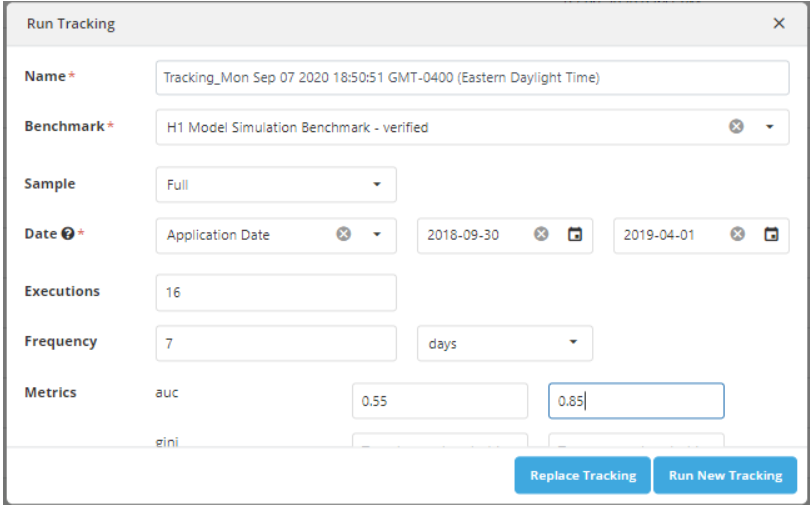

Automated model monitoring: The Platform lets banks easily set up automated performance monitoring of models at pre-scheduled intervals and define performance benchmark thresholds which, if breached, trigger an exception report to the model monitoring team. This automates the monitoring process and facilitates management by exception. In the example below, 16 iterations of model tracking on a weekly basis are set up with a floor and ceiling AUC (Area Under Curve) of 0.55 and 0.85 respectively.
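The threshold logic in this example can be sketched in a few lines of Python. The 16 weekly iterations and the 0.55 / 0.85 AUC bounds come from the example above; the class and function names (`MonitoringConfig`, `ExceptionRecord`, `exception_report`) are hypothetical and do not represent the Platform's API.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class MonitoringConfig:
    iterations: int = 16       # number of scheduled runs, from the example
    frequency: str = "weekly"  # descriptive only; scheduling is not modeled here
    auc_floor: float = 0.55
    auc_ceiling: float = 0.85


@dataclass
class ExceptionRecord:
    iteration: int  # 1-based index of the scheduled run
    auc: float
    reason: str


def exception_report(observed_auc: List[float], config: MonitoringConfig) -> List[ExceptionRecord]:
    """List every scheduled run whose AUC falls outside the configured band."""
    report = []
    for iteration, value in enumerate(observed_auc[: config.iterations], start=1):
        if value < config.auc_floor:
            report.append(ExceptionRecord(iteration, value, "below floor"))
        elif value > config.auc_ceiling:
            report.append(ExceptionRecord(iteration, value, "above ceiling"))
    return report
```

An empty report means no action is needed, which is the management-by-exception behavior described above. A ceiling is checked as well as a floor because an unexpectedly high AUC can point to data problems such as leakage.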

Refer to the section on [Model performance monitoring post approval](setting-up-model-monitoring-performance-post-approval.md) for details.

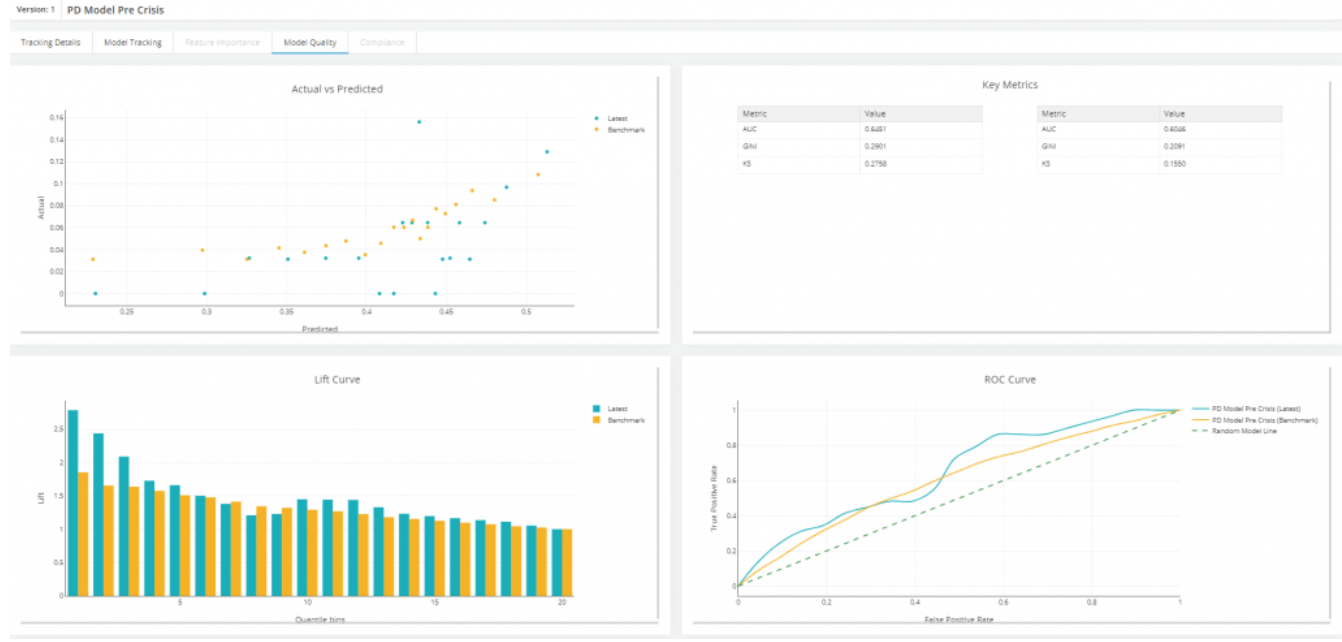

Segmental performance monitoring: The Platform allows users to easily set up and measure the segmental performance of BAU models to identify potential model ‘breaks’. In the example below, model performance is unstable for the customer segment in regions severely affected by Covid-19 (COVID severity cutoffs are based on a COVID index defined at the regional level).
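One simple way to express "unstable for a segment" is to map each region to a severity segment from its index and then flag segments whose AUC varies too much across monitoring periods. The sketch below assumes this approach; the index cutoffs, segment names, tolerance and function names are hypothetical and are not the Platform's definitions.

```python
from typing import Dict, List, Tuple


def severity_segment(covid_index: float, cutoffs: Tuple[float, float] = (0.3, 0.7)) -> str:
    """Map a regional COVID index to a severity segment using hypothetical cutoffs."""
    low, high = cutoffs
    if covid_index < low:
        return "mild"
    if covid_index < high:
        return "moderate"
    return "severe"


def unstable_segments(auc_history: Dict[str, List[float]], max_range: float) -> List[str]:
    """Return segments whose AUC range (max - min) across periods exceeds max_range."""
    flagged = []
    for segment, values in auc_history.items():
        if values and max(values) - min(values) > max_range:
            flagged.append(segment)
    return flagged
```

For example, `unstable_segments({"mild": [0.72, 0.71, 0.73], "severe": [0.74, 0.61, 0.68]}, 0.05)` flags only the `"severe"` segment, since its AUC swings by 0.13 across periods.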